Cybersecurity applications are everywhere these days.

We can choose from a huge range of EDR products, firewalls, VPN services, vulnerability scanners, code verifiers, network packet filters, data leakage protectors, automated software testing tools, behavior blockers, allowlists, blocklists, reputation monitors, ransomware detectors, AI-based threat classifiers, and many others.

Many of these tools enjoy privileged positions in our digital lives, not only having access to everything we do on our computers or send across the network, but also having the right to block or manipulate what we send and receive, often in ways that aren’t obvious.

Corporate firewalls, for example, are often primed with special company-wide cryptographic keys that allow them to decrypt and peek at everything we do, reading our email, monitoring our browsing, tracking our internet banking, and more.

This sort of automated monitoring is usually well-meant, with the underlying aim of “keeping the bad stuff out and the good stuff in,” so it’s legal and expected in many countries, but there are obvious downsides.

For example, what if an insider, or a contractor providing IT or security services, turns rogue and uses the firewall to stalk or spy on other staff?

What if the firewall has an exploitable software vulnerability that allows remote attackers to subvert it, injecting malware that surveils the firewall’s own surveillance?

Many, perhaps most, firewall brands have suffered from this sort of unauthenticated remote code execution (RCE) hole in recent years, sometimes discovered by cybercriminals and exploited in so-called zero-day attacks.

Reputable brands generally patch problems of this sort quickly once they’re known to the Good Guys, but zero-days get their name from the fact that there were zero days during which even the most zealous sysadmins could have patched their systems before the attacks started.

These days, with ever-more emphasis on automating the writing, testing and releasing of ever-more complex software products, there are ever more opportunities for attackers to subvert the very security tools we use in the hope of keeping our users safe.

A recent and informative example comes from a popular open-source cybersecurity toolkit called Trivy, created by a company called Aqua Security, which aims to help software developers improve the resilience of their products:

Trivy is a comprehensive and versatile security scanner. Trivy has scanners that look for security issues, and targets where it can find those issues [․․․] Trivy supports most popular programming languages, operating systems, and platforms.

Trivy includes related GitHub projects called setup-trivy, to configure your GitHub environment to use Trivy, and trivy-action, which you can plug into GitHub to perform security scans automatically in what’s known as a software development pipeline.

Pipelines represent a series of operations that you want to perform regularly and repeatably during the development process, such as, “Review new code; scan for security holes; recompile everything; re-do existing tests; and report whether everything seems to work as it did before.”

In a typical CI/CD development pipeline, where CI/CD is short for continuous integration/continuous delivery, it’s useful to automate as much of the process as you can, for example so that every proposed change by every developer can be tested even before it’s reviewed by a human.

Automatically rejecting code updates that no well-informed human would accept gives those human experts more time to spend on higher-order tasks, and helps them be correspondingly less likely to make mistakes due to time pressure.
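To make the idea of a pipeline concrete, here is a minimal sketch in Python of a series of checks that run in a fixed order and reject a proposed change at the first failure. Everything in it is hypothetical: the `Step` class, the two toy checks and the `STEPS` list are illustrations only, and bear no relation to how GitHub or Trivy implement their own pipelines.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Step:
    name: str
    run: Callable[[str], bool]  # takes the proposed change, returns True on success


def scan_for_secrets(change: str) -> bool:
    # Toy "security scan": reject changes that appear to hard-code a password.
    return "PASSWORD=" not in change


def builds_cleanly(change: str) -> bool:
    # Toy "recompile" step: check that the change is syntactically valid Python.
    try:
        compile(change, "<change>", "exec")
        return True
    except SyntaxError:
        return False


# Hypothetical pipeline: the order matters, and each step runs on every change.
STEPS = [Step("security scan", scan_for_secrets), Step("build", builds_cleanly)]


def run_pipeline(change: str, steps: List[Step]) -> Tuple[bool, List[str]]:
    """Run each step in order and stop at the first failure."""
    log = []
    for step in steps:
        ok = step.run(change)
        log.append(f"{step.name}: {'pass' if ok else 'FAIL'}")
        if not ok:
            return False, log
    return True, log
```

Note that every step runs with whatever access the pipeline itself has, which is why a tampered step is so dangerous: it gets triggered automatically, every time.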

Problems that security scanners such as Trivy may be able to seek out and detect automatically include:


But what about automated code review processes that are themselves affected by cybersecurity bugs or rogue code?

In this story, Trivy’s automated processing was tainted with malware.

Ironically, the attackers in this case didn’t just compromise Trivy’s main security and vulnerability scanning software (available in source code and binary form from the company’s trivy GitHub project).

They also poisoned the related setup-trivy and trivy-action projects, which act as what you might call the “glue” to set up your GitHub pipelines to carry out various Trivy scans on a regular basis.

The implanted setup-trivy and trivy-action malware, implemented as scripts written in Bash and Python, therefore targeted the entire GitHub environment in which your projects were hosted, and perhaps even the laptops or servers used by developers contributing to the projects, if those computers were used for any of the security scanning tasks invoked by trivy-action.

Any time any project activity triggered any sort of Trivy scan, the injected malware ran first, even if the Trivy scan ultimately ended up finding nothing wrong and raising no alarms.

The implanted malware, which includes a Python-coded component tagged by its creators as The Team PCP Cloud stealer, is programmed to carry out a wide range of criminal reconnaissance and data-plundering activities, depending on how the targeted trivy-actions were set up, including:

All of this data stealing happens every time a trivy-action is triggered by any activity in the CI/CD pipeline, before the relevant Trivy scan runs, and without affecting the apparent correctness of the security and vulnerability checks carried out.

Any data harvested in a run of the malware then gets encrypted by the attackers and exfiltrated (a fancy word for stolen), in one of two ways.

Firstly, the malware tries to upload the rogue file to a server sneakily named scan.aquasecurtiy.org, which uses a deliberate mis-spelling of the name aquasecurity, the company that publishes the Trivy project.
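Lookalike names of this sort can often be spotted automatically, because they sit just one or two typing slips away from a name you trust. The sketch below is one possible approach, not a description of any real product: it uses the optimal string alignment distance (edit distance that also counts a swap of two adjacent letters as a single slip) to compare each part of a hostname against a list of trusted names. The `TRUSTED_NAMES` set and the threshold of 2 are assumptions you would tune for your own environment.

```python
def osa_distance(a: str, b: str) -> int:
    """Optimal string alignment distance: insertions, deletions,
    substitutions and swaps of adjacent characters each cost 1."""
    rows, cols = len(a) + 1, len(b) + 1
    d = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        d[i][0] = i
    for j in range(cols):
        d[0][j] = j
    for i in range(1, rows):
        for j in range(1, cols):
            cost = 0 if a[i - 1] == b[j - 1] else 1
            d[i][j] = min(
                d[i - 1][j] + 1,         # deletion
                d[i][j - 1] + 1,         # insertion
                d[i - 1][j - 1] + cost,  # substitution (or match)
            )
            # Adjacent transposition, e.g. "it" typed as "ti".
            if i > 1 and j > 1 and a[i - 1] == b[j - 2] and a[i - 2] == b[j - 1]:
                d[i][j] = min(d[i][j], d[i - 2][j - 2] + 1)
    return d[-1][-1]


# Assumption: names you consider trustworthy; extend to suit your own suppliers.
TRUSTED_NAMES = {"aquasecurity"}


def looks_like_typosquat(hostname: str, max_distance: int = 2) -> bool:
    """Flag hostnames with a label that is close to, but not equal to, a trusted name."""
    labels = hostname.lower().rstrip(".").split(".")
    for label in labels:
        for trusted in TRUSTED_NAMES:
            if 0 < osa_distance(label, trusted) <= max_distance:
                return True
    return False
```

With these assumptions, `looks_like_typosquat("scan.aquasecurtiy.org")` flags the name used in this attack, because swapping the letters `it` to `ti` counts as a single slip. A check like this is only a heuristic: it can raise false alarms on legitimately similar names, and it will miss lookalikes that differ by more than the threshold.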

If that fails, perhaps because of firewall or blocklist rules that prevent the upload from working, the malware cheekily tries to upload the stolen data to a fake repository in the victim’s own GitHub account, using the innocent-looking project name tpcp-docs (named after Team PCP – see image above) to hide in plain sight.

Annoyingly, even if you spot the fake repository created by the malware and the file of stolen data called tpcp.tar.gz, you won’t be able to examine the contents of the rogue file to see what the crooks got hold of.

That’s because the encryption uses a ransomware-style process when packaging the data for exfiltration:

- The stolen data is encrypted with the AES-256-CBC symmetric cipher.
- The AES key is itself encrypted with an RSA-4096 public key that’s contained within the malware.
- The encrypted data and the encrypted key are packaged into tpcp.tar.gz.
- Only the attackers, who hold the matching RSA-4096 private key, can recover the AES key, decrypt the archive and extract the stolen data.

According to an ongoing post-incident analysis by Aqua Security, the illegal modifications to the company’s GitHub repository were carried out by an attacker who had somehow acquired access credentials with the authority to modify the affected projects.

Ironically, those credentials seem to have been acquired using credentials that were originally stolen at the start of March 2026, in an earlier compromise at Aqua Security in which one of the company’s Visual Studio Code extensions was poisoned.

How those first credentials were stolen is not clear, but attackers have many tricks at their disposal, including: phishing; bribery; keylogging or data-stealing malware already implanted on a developer’s computer; leakage by accidental upload of secret data; buying up access from so-called initial access brokers; and more.

Aqua Security noted that the company did attempt to change all compromised credentials after the first breach, but that “the process was not atomic.”

This is fancy jargon that means they didn’t revoke everyone’s access in one go, for example by kicking out all active users, taking their systems offline temporarily, and invalidating all currently-active credentials of every sort in a single, all-or-nothing process. (Atomic in this context simply means “indivisible.”)

In other words, attackers who already had access to the system, but who spotted that their existing login credentials had been blocked, had time to use the still-active authentication tokens in their current session to generate fresh credentials to get them back in next time.

That’s a bit like coming home, seeing that you left your key in the lock, and disturbing burglars who used the misplaced key to break in.

If you assume that the burglars ran off when they heard you coming home, and you then promptly call a locksmith to replace the old lock, you’d expect to be safe in future.

Except that if one of the burglars had hidden indoors in a closet while the lock was being changed, instead of running off, they could sneak out of their hiding place later on, and quietly grab a copy of the brand new key on their way out.
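The same race can be shown in a few lines of code. The toy model below is purely illustrative and makes no claim about how GitHub or Aqua Security actually manage credentials: `ToyAuthServer` and its methods are hypothetical, and the model assumes only that a live session token can be used to create a fresh long-lived credential.

```python
import secrets


class ToyAuthServer:
    """Toy model: long-lived credentials, plus session tokens minted from them."""

    def __init__(self):
        self.credentials = {}  # credential -> owner
        self.sessions = {}     # session token -> owner

    def issue_credential(self, owner: str) -> str:
        cred = secrets.token_hex(8)
        self.credentials[cred] = owner
        return cred

    def login(self, cred: str) -> str:
        owner = self.credentials.get(cred)
        if owner is None:
            raise PermissionError("credential revoked or unknown")
        token = secrets.token_hex(8)
        self.sessions[token] = owner
        return token

    def mint_credential(self, session_token: str) -> str:
        # Assumption: any live session may create a new credential.
        owner = self.sessions.get(session_token)
        if owner is None:
            raise PermissionError("session invalid")
        return self.issue_credential(owner)

    def rotate_non_atomic(self, owner: str) -> str:
        """Revoke stored credentials, but leave active sessions alone."""
        for cred in [c for c, o in self.credentials.items() if o == owner]:
            del self.credentials[cred]
        return self.issue_credential(owner)

    def rotate_atomic(self, owner: str) -> str:
        """Kill sessions and credentials together, then issue a new credential."""
        for token in [t for t, o in self.sessions.items() if o == owner]:
            del self.sessions[token]
        return self.rotate_non_atomic(owner)


def attacker_survives(rotation: str) -> bool:
    server = ToyAuthServer()
    stolen = server.issue_credential("dev")
    session = server.login(stolen)  # attacker logs in before the clean-up
    if rotation == "atomic":
        server.rotate_atomic("dev")
    else:
        server.rotate_non_atomic("dev")
    try:
        fresh = server.mint_credential(session)  # the burglar in the closet
        server.login(fresh)
        return True
    except PermissionError:
        return False
```

In this model, `attacker_survives("non-atomic")` returns `True`, because the old session outlives the credential it came from, while `attacker_survives("atomic")` returns `False`.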

The attack seems to have started early in March 2026, and, as previously mentioned, Aqua Security’s initial attempts to kick out the cybercriminals to contain the attack were incomplete and unsuccessful.

So, if you use Trivy in your GitHub pipelines, be sure to read Aqua Security’s incident response page, and to revisit it regularly, because it is still being updated at the time of writing [2026-03-23], as the company digs deeper into the incident and finds out more about what happened.

Many Trivy users seem likely to have been affected, because the attackers sneakily modified almost all versions of setup-trivy and trivy-action in Aqua Security’s official releases.
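As a starting point for triage, you could search your workflow files and logs for references to the affected actions and for the names mentioned earlier in this article. The sketch below is a rough aid rather than a detection tool. It assumes the actions are referenced in workflow files as `aquasecurity/trivy-action` and `aquasecurity/setup-trivy`, the `triage` function and its output format are invented for illustration, and the version number in the usage note is a placeholder rather than a statement about any particular release.

```python
import re
from typing import Dict, List

# Assumption: the owner/repo form under which workflows reference the two actions.
AFFECTED_ACTIONS = ("aquasecurity/trivy-action", "aquasecurity/setup-trivy")

# Names taken from this article: the fake server domain, repository and archive.
INDICATORS = ("aquasecurtiy.org", "tpcp-docs", "tpcp.tar.gz")

# Matches lines such as "- uses: owner/repo@ref" or "uses: owner/repo@ref".
USES_RE = re.compile(
    r"^\s*-?\s*uses:\s*['\"]?([\w.-]+/[\w.-]+)@([^\s'\"#]+)",
    re.MULTILINE,
)


def triage(text: str) -> Dict[str, List[str]]:
    """Report references to the affected actions and any indicator strings."""
    refs = [
        f"{repo}@{ref}"
        for repo, ref in USES_RE.findall(text)
        if repo.lower() in AFFECTED_ACTIONS
    ]
    lowered = text.lower()
    hits = [indicator for indicator in INDICATORS if indicator in lowered]
    return {"action_refs": refs, "indicators": hits}
```

For example, a workflow containing the line `uses: aquasecurity/trivy-action@1.2.3` would be reported under `action_refs`, and a log line mentioning `scan.aquasecurtiy.org` would be reported under `indicators`. Finding a reference doesn’t prove you were compromised, and finding nothing doesn’t prove you weren’t, so treat the results as pointers to where to look next alongside Aqua Security’s own guidance.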

You should, at the very least:

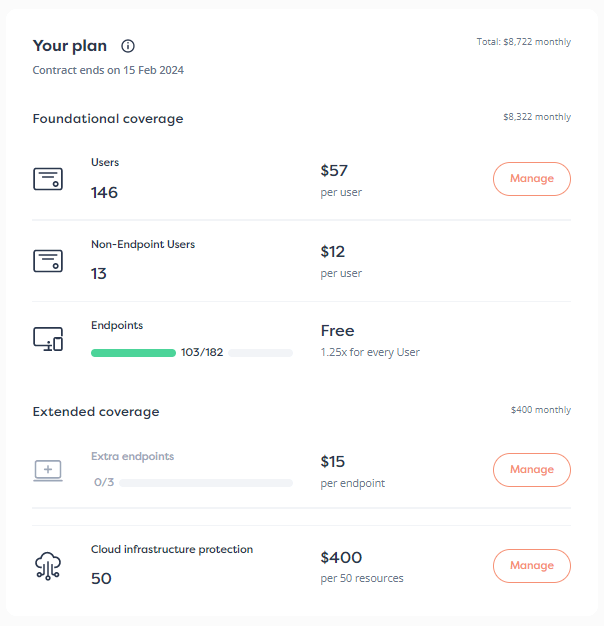

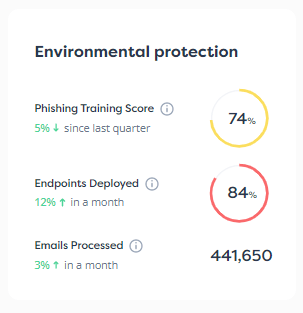

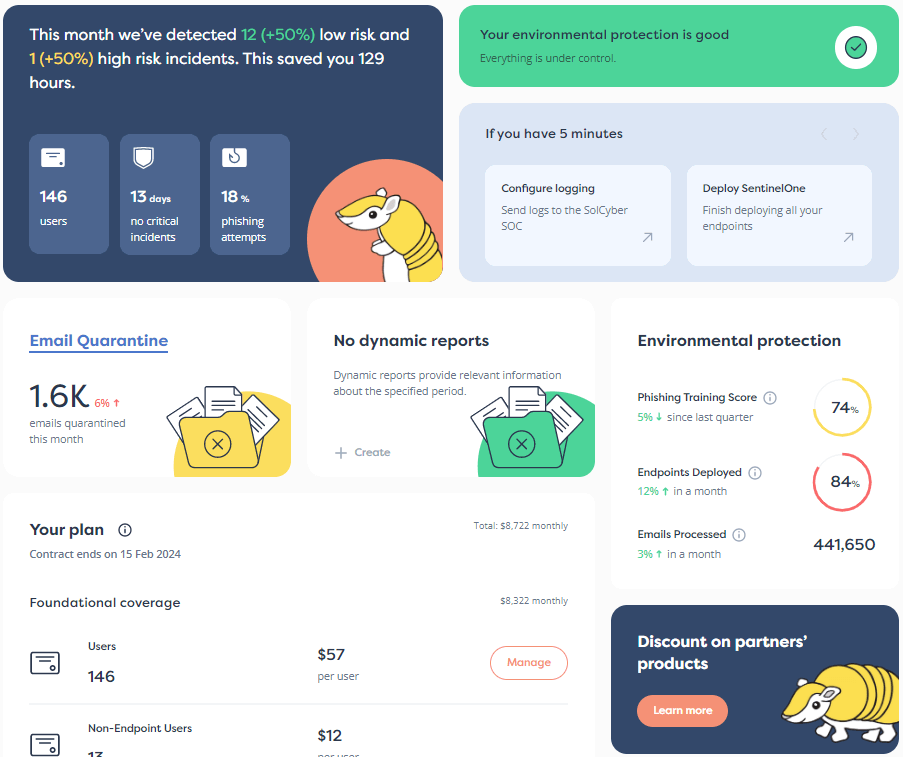

- Look for any sign of aquasecurtiy.org (note the deliberate mis-spelling of aquasecurity), or the presence of projects or files named tpcp-docs and tpcp.tar.gz in your GitHub repositories.
- Check whether any trivy-actions ran on local computers where the file-grabbing parts of the malware could have run.
- Take note of the change in version naming from X.Y.Z, where X, Y and Z are numbers, to vX.Y.Z, where v is the lower-case letter V, so that all old-style software builds have visibly different names from the new ones.

Why not ask how SolCyber can help you do cybersecurity in the most human-friendly way? Don’t get stuck behind an ever-expanding convoy of security tools that leave you at the whim of policies and procedures that are dictated by the tools, even though they don’t suit your IT team, your colleagues, or your customers!

Paul Ducklin is a respected expert with more than 30 years of experience as a programmer, reverser, researcher and educator in the cybersecurity industry. Duck, as he is known, is also a globally respected writer, presenter and podcaster with an unmatched knack for explaining even the most complex technical issues in plain English. Read, learn, enjoy!
