Hackers Are Just Another Insider Threat

Hackers are no longer just external threats—they’re increasingly acting as insider threats by exploiting identities rather than simply targeting endpoint devices.

We’ve all encountered headlines talking up a brand new strain of “undetectable malware”, because stories of that sort make exciting copy, and not just in the IT or cybersecurity media.

We’ve probably all clicked through, too, simply because those words sound important and intriguing, despite the obvious contradiction they convey.

After all, if the malware in question really were undetectable, the story couldn’t have been written, because no one would have known that the malware existed in the first place.

Sometimes, apparently without any sense of irony, “undetectable malware” stories will turn out to have been written and pitched to the media by cybersecurity researchers or vendors who quickly admit that the malware they’re talking about is detectable after all…

…because they’ll insist not only that they discovered it, but also that they just happen to have a uniquely new and shiny cybersecurity tool, available right now, that will swiftly identify it and remove it.

Clearly, “undetectable” is one word that doesn’t literally apply to malware of this sort, and companies that play the undetectability card too seriously or too often should obviously be treated with caution.

But that doesn’t mean that malicious software can’t be hard to detect, or, perhaps more worryingly, hard to distinguish from legitimate software, in the midst of which it can hide in plain sight.

It’s well worth mastering the myth of so-called “undetectable malware” by looking at some of the tricks and techniques that cybercriminals use to make the job of threat hunting harder, whether that hunting is done by automated tools, real-life humans, or both.

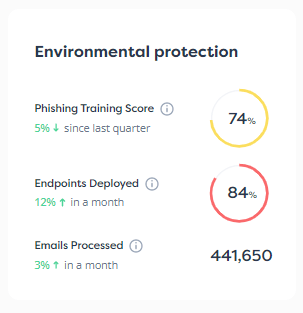

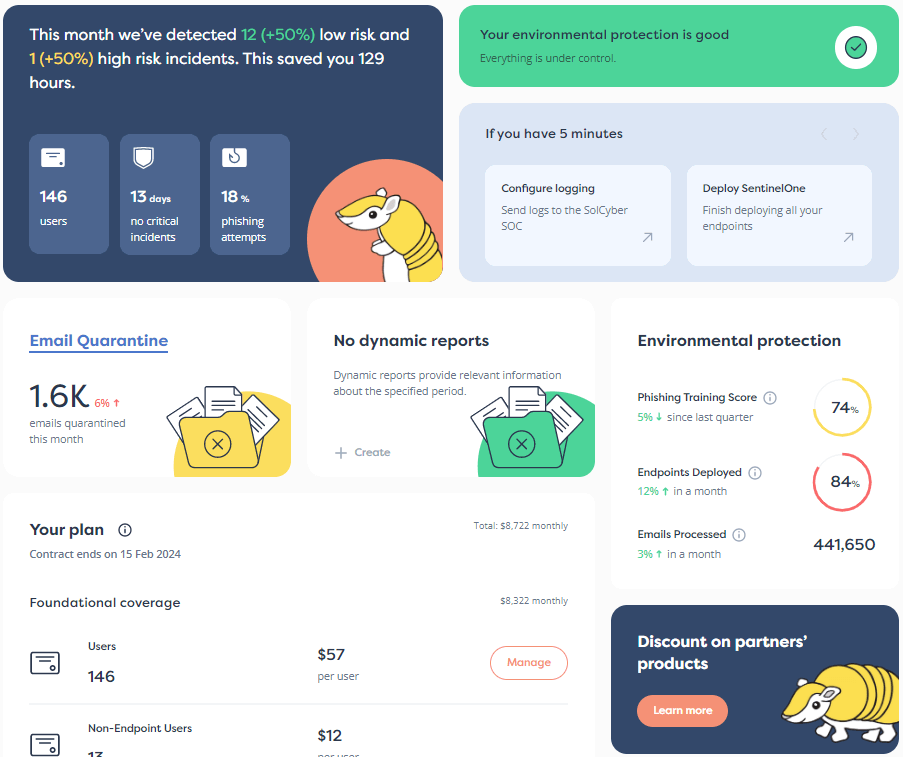

If you want to run your own menagerie of cybersecurity software and your own SOC (Security Operations Center), you’ll need to keep on top of all of these issues; if you plan to choose a Managed Security Service Provider, you’ll want to choose one that can explain and elucidate the issues in plain words if tricky malware of this sort shows up in your ecosystem.

The biggest problem with malware you haven’t spotted yet is that if you don’t even know it’s there, you can’t set about getting rid of it; you can’t measure what trouble it’s caused, if any; and you can’t devise a plan so you are well-placed to keep it out next time.

(There usually is a next time, because cyberattackers don’t give up on old techniques whenever fashionable new attack tools show up in the cyberunderground: they keep the old tools just as enthusiastically as they adopt the new ones, thus ensuring you the worst of both worlds.)

I’ve therefore broken down hard-to-find malware, which is what I’ll call it instead of “undetectable”, into four classes, and I’ve come up with an easy-to-remember list of techniques that I’m calling The Four Fs.

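Hard-to-find malware usually fits into one or more of these categories:

• Furtive. Malware that cloaks itself once it is active, so that the usual tools see nothing suspicious.

• Fileless. Malware that runs directly from memory without being saved to disk first.

• Fiendish. Malware that disguises its code under deliberate layers of complexity and obfuscation.

• Faked. Malware that passes itself off as trusted by carrying credentials that appear to be beyond suspicion.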

The jargon terms that are already known in the industry differ from these, of course, so I’m not suggesting you use them formally in your technical reports or your media briefings.

But I think you’ll find them handy and instructive when you need to explain cybersecurity issues to your friends, family, colleagues, CxOs, suppliers, customers and anyone else who wants to understand why cybersecurity matters, and how to get better at it.

Here goes!

The popular jargon term for furtive malware is stealth, also often referred to by the jocularly serious name anti-anti-virus, because it aims to fight back against the software you installed to fight back against it.

The idea is that, once active, furtive malware will go out of its way to ‘cloak’ itself, with the aim of evading the tools and techniques commonly used to spot that something suspicious is going on.

Stealthy malware aims to present what looks like a clean bill of health by showing you what you’d really like to see, in the hope that this will discourage you from looking any further, or, if you’re already suspicious, that it will encourage you to spend ages looking in all the wrong places instead.

Fascinatingly, the very first virus that ever became widespread for the IBM PC – the progenitor of the Windows computers we use to this day – introduced this sort of subterfuge, and it has been an annoyingly effective attack tool ever since.

That early virus was known as Brain, and it spread via floppy disks, which everyone used because they were the only reliable way of moving data and programs between PCs back then.

(Imagine a USB stick, but 5.25 inches wide, flexible and easily damaged, desperately slow, and typically holding just 360KB, which is enough to store about 1/20th of a second of video from your mobile phone, or the top left corner of a selfie taken with your front-facing camera.)

The virus was designed to travel even on freshly-formatted disks by storing itself in the boot sector, the first 512 bytes of the disk, which were read and executed automatically when the computer was turned on so it could start up by itself.

When it wasn’t active, Brain was happy to be noticed, and went out of its way to brag about its creators (they weren’t breaking any laws at the time), who put their real address and phone numbers into the boot sector.

But once the virus was active and using your computer as a vehicle for infecting every disk you inserted, it loaded a special chunk of code that intercepted any attempts by DOS, the operating system of the day, to read in the boot sector and inspect it.

Brain redirected the disk access to a hidden copy of the original boot sector instead, thus giving the impression that the disk and your computer were clean, leaving you with a false sense of security.
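The redirection trick is easy to model in a few lines of Python. This is a toy simulation only, not Brain's actual code: the `ToyDisk` class, the `install_stealth_hook` helper and the choice of sector 7 as the hiding place are all hypothetical names and values invented for illustration.

```python
SECTOR_SIZE = 512
BOOT_SECTOR = 0      # where the computer looks at startup
HIDDEN_SECTOR = 7    # hypothetical spot where the original is stashed


class ToyDisk:
    """A pretend floppy disk: just a list of 512-byte sectors."""

    def __init__(self, sector_count=16):
        self.sectors = [bytes(SECTOR_SIZE) for _ in range(sector_count)]

    def raw_read(self, n):
        """Read a sector directly, with no interception."""
        return self.sectors[n]

    def read(self, n):
        """The route that ordinary programs use; this is what gets hooked."""
        return self.raw_read(n)


def install_stealth_hook(disk):
    """Redirect reads of the boot sector to the hidden clean copy."""
    def hooked_read(n):
        if n == BOOT_SECTOR:
            n = HIDDEN_SECTOR
        return disk.raw_read(n)
    disk.read = hooked_read


disk = ToyDisk()
clean = b"ORIGINAL BOOT CODE".ljust(SECTOR_SIZE, b"\0")
infected = b"VIRUS BOOT CODE".ljust(SECTOR_SIZE, b"\0")

disk.sectors[HIDDEN_SECTOR] = clean    # stash the original
disk.sectors[BOOT_SECTOR] = infected   # overwrite the real boot sector

before_hook = disk.read(BOOT_SECTOR)   # hook not active: infection visible
install_stealth_hook(disk)
after_hook = disk.read(BOOT_SECTOR)    # hook active: disk looks clean
```

Note that `raw_read()` still returns the infected sector after the hook is installed, which is why a detection tool that can reach beneath the intercepted route has a chance of seeing the truth.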

Modern computers don’t start up via the boot sector any more, so this sort of boot sector trickery is of little use these days.

Instead, they boot from a special disk partition known as the EFI System Partition, and under Windows 11 the startup code stored there is checked for tampering by default thanks to a firmware feature known as Secure Boot.

But that hasn’t stopped contemporary malware authors from coming up with a wide range of stealthy anti-anti-virus tricks of their own, aimed at making detection harder and thereby helping their malware to evade detection for longer.

For example, many modern endpoint security tools rely on Microsoft security features whereby the operating system kernel itself reports to anti-malware software whenever potentially dangerous actions take place, so they can decide whether to intervene.

This includes events such as new processes firing up, registry entries being examined or changed, and software attempting to peek at or modify the behaviour of other programs.

(If you’re interested in learning more about these low-level monitoring tools, use your favourite search engine to look for items such as PsSetCreateProcessNotifyRoutine, ObRegisterCallbacks, and Event Tracing for Windows, or ETW.)

Malware that can stealthily manipulate the settings of an endpoint security tool’s monitoring callbacks, so that the tool doesn’t notice that its protection levels have changed, can furtively conduct all sorts of malicious activities without being spotted.

Sneaky malware can even reactivate those settings once it has completed its undercover work, such as injecting unauthorised code into the memory areas reserved for an already-trusted program, so that the system appears to be correctly protected to anyone who decides to check later on.

In other words, even though stealth malware isn’t truly undetectable, as we explained above, software tools may need to take special care in order to detect it reliably, for example by adding anti-anti-anti-virus tricks of their own.
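One simple idea along those lines is a self-test: the monitoring software deliberately triggers a harmless event of its own and checks that the notification still arrives. The sketch below is a toy model of that idea, not a description of how any real product or the Windows kernel works; `EventBus`, `Monitor` and the canary event name are hypothetical.

```python
class EventBus:
    """Stand-in for the operating system's notification mechanism."""

    def __init__(self):
        self.callbacks = []

    def register(self, callback):
        self.callbacks.append(callback)

    def emit(self, event):
        for callback in list(self.callbacks):
            callback(event)


class Monitor:
    """Stand-in for security software that listens for events."""

    def __init__(self, bus):
        self.bus = bus
        self.seen = []
        bus.register(self.on_event)

    def on_event(self, event):
        self.seen.append(event)

    def self_test(self):
        """Emit a harmless canary event and confirm that it was received."""
        canary = "canary-process-start"
        self.seen.clear()
        self.bus.emit(canary)
        return canary in self.seen


bus = EventBus()
monitor = Monitor(bus)
healthy = monitor.self_test()      # callbacks intact

saved = list(bus.callbacks)
bus.callbacks.clear()              # attacker silently unhooks the monitor
blinded = monitor.self_test()      # the canary never arrives

bus.callbacks.extend(saved)        # attacker restores the settings later
restored = monitor.self_test()     # everything looks normal again
```

The last line shows the catch: a self-test only reveals tampering if it happens to run while the monitor is blinded, so a check that runs rarely, or on a predictable schedule, can easily miss the window.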

Likewise, human threat hunters need to keep up with an ever-increasing range of attack symptoms to watch out for, so that they can spot the very tricks now being used to stop them spotting the tricks they knew to look out for last year or last month.

If you’re looking for a job that never lets up, won’t give you any vacation time, and will confiscate all your weekends, running a SOC as an extra part of your day-to-day work when cybersecurity isn’t your core business is, sadly, a good place to start.

Many traditional threat detection tools and techniques rely on being able to ‘pin down’ malware at some point in its active life by assuming that it will end up stored in a disk file along the way, even if only temporarily.

For example, if you launch a program or examine a document with your browser, it almost always gets saved to a temporary file on disk first and then executed or opened from there, even if you didn’t choose the [Save] option.

Because the browser needs to finish writing the file to disk (so that it knows it has received the whole thing) before it hands it over to the operating system to launch it, this makes an ideal staging and interception point for software that’s programmed to detect and block threats.

Most endpoint software is able to ‘freeze’ access to files temporarily while it scans them for malware, checks them against a list of unwanted files, or otherwise vets them for suitability.

After that, it typically either ‘unfreezes’ the file to release it for use by other programs, including the operating system itself, or denies access in a way that the program trying to use it can’t bypass, including (if so configured) by deleting the file altogether so no one can use it.
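The check-then-release step can be sketched as follows, assuming the simplest possible vetting rule: a list of SHA-256 hashes of unwanted files. The `BLOCKLIST` contents and the `vet_file` and `write_temp` helpers are hypothetical, and real endpoint software hooks into the filesystem at operating system level and does far more than compare hashes.

```python
import hashlib
import os
import tempfile

# Hypothetical blocklist: SHA-256 digests of files we never want to run.
BLOCKLIST = {hashlib.sha256(b"pretend this is known-bad content").hexdigest()}


def sha256_of_file(path):
    """Hash a file in chunks so that large files don't fill up memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def vet_file(path, delete_if_blocked=False):
    """Return True to release the file, False to deny access to it."""
    if sha256_of_file(path) in BLOCKLIST:
        if delete_if_blocked:
            os.remove(path)
        return False
    return True


def write_temp(data):
    """Simulate a browser saving a completed download to a temporary file."""
    with tempfile.NamedTemporaryFile(delete=False) as handle:
        handle.write(data)
        return handle.name


good_path = write_temp(b"an ordinary document")
bad_path = write_temp(b"pretend this is known-bad content")

good_released = vet_file(good_path)
bad_released = vet_file(bad_path, delete_if_blocked=True)
bad_still_exists = os.path.exists(bad_path)
os.remove(good_path)   # tidy up the file we created for the demonstration
```

Everything here depends on the content reaching disk as a complete file before anything tries to use it.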

But what if malware doesn’t follow this path, and instead uses an innocent-looking program to download a binary chunk of malicious code straight into memory, without drawing attention to it by saving it to disk, and just executes it directly?

This is how fileless malware works, and malware writers quite deliberately came up with the idea in order to sidestep file-based detection tools, thus creating malware that was harder, and more time-consuming, to detect reliably.

In fact, we’ve previously written about a famous fileless malware sample from way back in 2003 known as SQL Slammer.

Slammer arrived in a single network packet of under 400 bytes, wormed its way directly into memory in an unpatched Microsoft SQL Server, immediately gained remote code execution (RCE) straight from memory, and set about using the network function sendto() to blast itself back out to as many other computers on the local network and the internet as it could reach.

The virus was not only unstoppable by security tools that relied on finding malware by waiting until it was written to disk, but also left no forensically recoverable copies of itself behind in disk files from which threat hunters could later recover it for analysis.

Surprisingly, perhaps, a lot of modern malware that’s excitingly trumpeted as “fileless and therefore undetectable” by headline hunters is much less fileless in practice than SQL Slammer was 20 years ago.

For example, malware that relies on an attack chain that unfolds in multiple stages will often be dubbed “fileless” even if only one of the stages happens filelessly and all the other stages rely on creating suspicious intermediate files that can be detected and blocked traditionally.

Nevertheless, fileless malware represents a tricky and hard-to-handle problem for threat hunters, not least if the final stage of the attack happens directly from memory, so that no copy of the actual code that ran remains behind afterwards.

If you miss an attack and get hit by crooks, it’s handy to know how the malware arrived and was set up for execution by its non-fileless parts, because that helps you plan your defences for next time.

But it’s troublesome if there is simply no way to dig into the code that ran in the final step of the attack, because that makes it much harder to decide what happened, and what damage, if any, you need to look out for.

If you want to stretch the definition somewhat, you can even think of ransomware as having a fileless component in the form of the encryption keys it uses, which are generated randomly in memory, but never written in plaintext form to disk, so they can’t subsequently be recovered.

Even if you spend days or weeks working your way through every raw disk sector hoping to find some recoverable remnants of the cryptographic keys you need…

…you won’t.

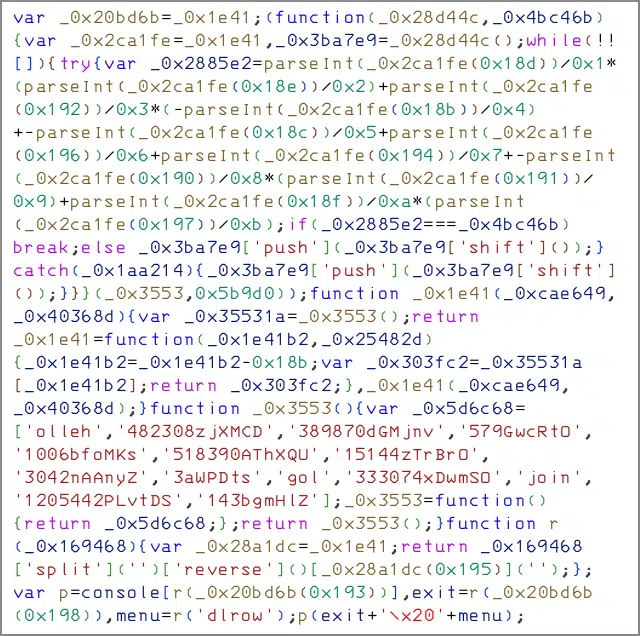

Cybercrime trickery based on deliberate layers of complexity has been around since the earliest days of malware, and it aims to make malware hard to detect by disguising its code through various sorts of artificial modification and obfuscation, a word that comes from the Latin fuscus, meaning dark or dimly-lit.

We looked at this sort of ‘self-disguise’ in a recent two-part series entitled Script Malware: When simple things turn out hard, where we pointed out just how easily code that is trivial to understand in its original form can automatically be converted into an equivalent but fiendish-to-unravel form before it is unleashed.

For example, we showed that a one-line JavaScript program could be rewritten, without needing any coding skills, simply by feeding it into an obfuscation tool that would convert it into a bizarre-looking program that did exactly the same thing.
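The JavaScript from that series isn't reproduced here, but the same idea can be shown with a rough analogue in Python. The `obfuscate` function below is a hypothetical, deliberately crude scrambler written for this illustration; real obfuscation tools are far more elaborate.

```python
def obfuscate(source):
    """Rewrite an expression as a chain of chr() calls wrapped in eval()."""
    pieces = "+".join(f"chr({ord(ch)})" for ch in source)
    return f"eval({pieces})"


original = "6 * 7"
scrambled = obfuscate(original)
# scrambled is now: eval(chr(54)+chr(32)+chr(42)+chr(32)+chr(55))

# Both forms compute the same value, but only one of them is readable.
same_result = eval(original) == eval(scrambled)
```

The scrambled form no longer contains the text of the original anywhere, yet it takes no skill to produce and no effort to run.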

Similar scrambling and obfuscation techniques have existed for decades even for program code that isn’t as easy to rewrite and modify as text-based scripts are, including standalone machine code fragments (often referred to as shellcode), and program files such as .EXE and .DLL files on Windows.

In the early days of virus writing, self-mutating or polymorphic toolkits became an obsession for some programmers in the counterculture, who competed to create self-scrambling tools that other virus writers could incorporate into their own code to make their malware harder to detect.

These tools used randomised encryption, instruction substitution, code replacement and even virtual machine techniques to rewrite malware code that was easy to match and detect in its original form into potentially millions or billions of complicated permutations.

Even back in the early 1990s, threat detection tools that relied only on so-called patterns or signatures (fixed strings of known-bad code sequences that could be matched inside suspicious files) were out of luck, thanks to code scrambling toolkits with dramatic names such as Dark Avenger’s Mutation Engine (MtE), Dark Angel’s Multiple Encryptor (DAME), and Christopher Pile’s Simulated Metamorphic Encryption Generator (SMEG).
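To see why fixed patterns fall short, here is a minimal sketch that uses a single-byte XOR as a stand-in for the much more elaborate scrambling those toolkits performed. The `SIGNATURE` bytes and the helper names are hypothetical, and the "code" is just harmless text.

```python
import secrets

# Hypothetical fixed pattern that a signature-based scanner looks for.
SIGNATURE = b"KNOWN-BAD-SEQUENCE"


def signature_scan(data):
    """Naive detection: is the fixed pattern present anywhere in the data?"""
    return SIGNATURE in data


def xor_bytes(data, key):
    """XOR every byte with a one-byte key; doing it twice restores the data."""
    return bytes(b ^ key for b in data)


body = b"...harmless filler..." + SIGNATURE + b"...more filler..."

key = secrets.randbelow(255) + 1   # random key from 1 to 255, never 0
scrambled = xor_bytes(body, key)

found_in_original = signature_scan(body)
found_in_scrambled = signature_scan(scrambled)
round_trip_ok = xor_bytes(scrambled, key) == body
```

Each of the 255 possible keys produces a different scrambled copy, and none of them contains the pattern the scanner knows about, even though the original can be recovered exactly at run time.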

As a historical aside, Pile, who was based in England, was the first person arrested and convicted for malware-related offences under the UK’s Computer Misuse Act of 1990, still that country’s “main legislation that criminalises unauthorised access to computer systems and data, and the damaging or destroying of these.” (Pile was sent to prison for 18 months in 1995.)

Paradoxically, detecting files that have been scrambled by tools of this sort is often surprisingly easy, because the fiendishness of their obfuscation makes their code stand out as unusual and unnatural.
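One commonly used way of measuring how "unnatural" a chunk of data looks is its Shannon entropy, which is my choice of example here rather than anything specific to the tools mentioned above. The sketch below uses random bytes as a stand-in for encrypted or packed code; `shannon_entropy` is a hypothetical helper name.

```python
import math
import os
from collections import Counter


def shannon_entropy(data):
    """Return the entropy of the data in bits per byte (0.0 up to 8.0)."""
    if not data:
        return 0.0
    total = len(data)
    counts = Counter(data)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


plain = b"The quick brown fox jumps over the lazy dog. " * 100
scrambled = os.urandom(len(plain))   # stand-in for encrypted or packed code

plain_entropy = shannon_entropy(plain)
scrambled_entropy = shannon_entropy(scrambled)
```

Ordinary text scores well below the 8-bit maximum, while the scrambled data sits close to it. A high score is only a hint, though, because compressed and encrypted data of entirely legitimate origin scores just as high.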

Annoyingly, however, digging inside the obfuscation to find out what the scrambled code actually does is often fiercely complicated, which makes analysing and understanding it harder and more time-consuming for malware scanners and threat researchers alike.

Worse still, a surprising amount of legitimate, commercial software, such as copy protection, anti-cheat and penetration testing tools, includes or uses these very techniques to ‘protect’ or otherwise ‘benefit’ its users.

This means that automated threat-hunting tools can’t directly use the presence of suspiciously scrambled code to detect and isolate malicious programs, or else they will end up blocking legitimate software that might be business-critical.

Similarly, human threat analysts often need to apply an eclectic and esoteric mixture of art and science to unravel code that looks bad at first sight, in case it ultimately turns out to be both legitimate and necessary.

The last sort of hard-to-detect malware sample we’ll look at today is what I call the faked file.

This covers malware that doesn’t so much disguise itself as pass itself off as beyond suspicion by carrying VIP identification credentials of apparently unimpeachable quality.

The idea here is for malware to convince the operating system not to bother examining it in advance, or to be suspicious of it while it is running, on the grounds that it is trusted code and is serving a gallant and vital purpose, no matter how dangerously it might seem to behave.

You can think of this like fitting an unauthorised private car with police decals, emergency lights and warning sirens, and then masquerading as an official vehicle in order to avoid getting pulled over for speeding or blasting past other cars on the wrong side of the road.

Earlier, for example, we mentioned malware stealth techniques on Windows that involve temporarily tweaking the set of system notifications that security software receives.

The idea is to buy just enough time for the malware to perform a critically dangerous operation without being noticed, and then to restore the safe-and-secure settings to evade suspicion.

That sort of manipulation is surprisingly easy for malware that can load up what’s known as a kernel driver, a type of ‘software assistant’ that runs right inside the operating system; it’s much harder for malware to do so from outside the kernel, because the kernel itself keeps regular code and kernel code apart.

That’s because kernel-level software has significantly more power, and can be significantly more dangerous, than regular software, even when that software is running with Administrator privileges.

Many years ago, in an effort to control this problem, Microsoft decided that kernel drivers shouldn’t be allowed to run unless they had been vetted and digitally signed by Microsoft itself.

Apple and Google operate a similar barrier to entry via the App Store and Google Play respectively, where apps won’t be admitted for download, whether paid or free, without first getting the company’s imprimatur in the form of a digital signature that acts as a credential to prove that they’ve been tested and approved by the vendor.

As useful as these checks and balances may be (and malware attacks involving dangerous kernel drivers have indeed gone from being an everyday problem on Windows to a rarity), there’s always that worrying question, “What if a rogue program does an end-run round the certification process and gets a digital seal of approval anyway?”

Sadly, even though that sort of thing is comparatively unusual, it happens annoyingly often, and it creates a very dangerous situation in which malware acquires a formal badge of authority that means it’s free and clear to wreak havoc, and won’t be detected, or stopped, or shut down even if it does things that would normally set off alarm bells loud and clear.
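The underlying weakness is that a signature check confirms who approved the code, not what the code does. Here is a minimal sketch of that gap, with one loud assumption: it uses an HMAC with a secret key as a stand-in for a real public-key signature, purely so that it runs with Python's standard library alone. The key, the driver contents and the function names are all hypothetical.

```python
import hashlib
import hmac

# ASSUMPTION: HMAC with a secret key stands in for a real public-key
# signature scheme, purely to keep this sketch within the standard library.
VENDOR_KEY = b"hypothetical-vendor-signing-key"


def sign(code):
    """What the vendor's signing service does once it approves the code."""
    return hmac.new(VENDOR_KEY, code, hashlib.sha256).digest()


def loader_accepts(code, signature):
    """What the operating system checks before it loads a driver."""
    return hmac.compare_digest(sign(code), signature)


good_driver = b"legitimate driver code"
rogue_driver = b"driver built to switch off security software"

good_sig = sign(good_driver)
rogue_sig = sign(rogue_driver)     # the vetting failed: signed by mistake

unsigned_blocked = not loader_accepts(rogue_driver, b"\0" * 32)
tampered_blocked = not loader_accepts(good_driver + b"!", good_sig)
rogue_accepted = loader_accepts(rogue_driver, rogue_sig)
```

The check does its job against unsigned and tampered code, but once the rogue driver has a genuine signature, the loader has no way to tell it apart from the legitimate one.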

In 2022, for instance, and again in 2023, Microsoft published advisories admitting that it had wrongly signed (or allowed someone else to sign on its behalf – it’s not entirely clear how this happened) a bunch of kernel drivers that were built specifically to allow malware creators to deploy attack tools that would operate from inside the kernel.

Similarly, rogue apps turn up with annoying regularity, if fortunately not with high frequency, in both Apple’s App Store and Google Play.

Cybercriminals know that it’s worth putting an enormous amount of effort into figuring out how to trick the vetting processes of Apple or Google, because they only need to succeed once to acquire what is effectively an overarching certificate of entitlement to install malware on your device.

Human threat hunters need to take a ‘zero-trust’ approach, to assume that no software can truly be beyond suspicion, and to maintain a level of technical and intellectual vigilance that automated tools can’t, and that regular users and IT staff simply don’t have the time for, even if they have the necessary expertise.

• “Undetectable malware” must be detectable if it’s already been identified and written about. So, don’t feel dissuaded or discouraged when you see PR headlines trumpeting that some new strain of “undetectable malware” is out there and preying on us right now, and don’t let yourself get frightened into buying yet more security tools from the vendor who is talking it up.

• Keeping on top of hard-to-handle malware is a full-time job in its own right. Doing it yourself, or relying on a set of automated software tools to do it for you, probably isn’t going to work out. Consider a Managed Security Service that’s run by humans, for humans, to help you with the complex and sometimes counterintuitive parts of threat detection and response, where things that look dangerous may turn out to be legitimate, yet things that are genuinely dangerous may look unimportant at first glance.

• Be prepared to change your approach to cybersecurity as new anti-anti-malware tricks evolve. Look for a cybersecurity partner who will go the extra mile and let you talk to knowledgeable humans to get help with anything to do with cybersecurity, not just to deal with incident-related queries.

Paul Ducklin is a respected expert with more than 30 years of experience as a programmer, reverser, researcher and educator in the cybersecurity industry. Duck, as he is known, is also a globally respected writer, presenter and podcaster with an unmatched knack for explaining even the most complex technical issues in plain English. Read, learn, enjoy!

Featured image by Jeremy Bishop on Unsplash

Hackers are no longer just external threats—they’re increasingly acting as insider threats by exploiting identities rather than simply targeting endpoint devices.

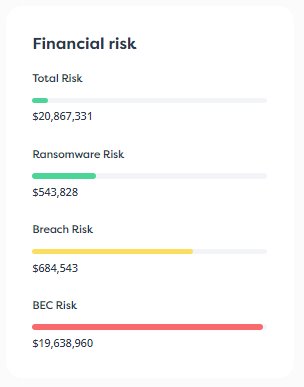

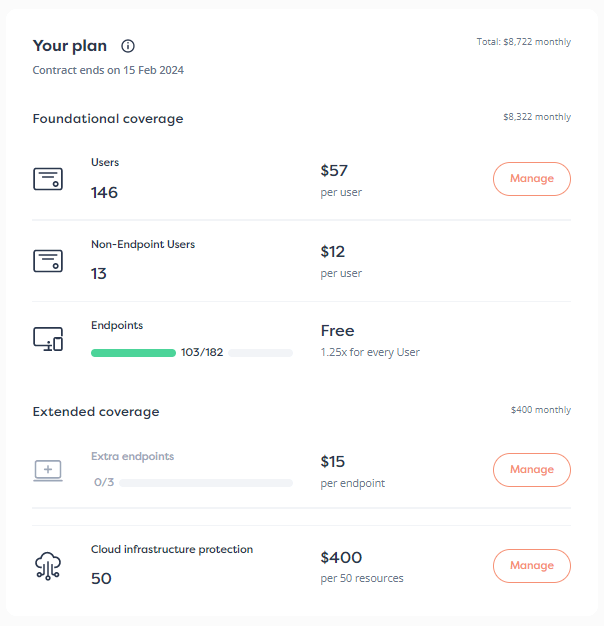

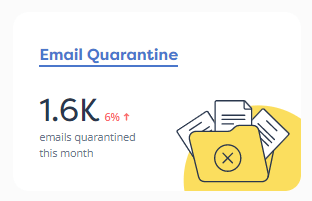

Data from the FBI’s IC3 (Internet Crime Complaint Center) painted a stark picture of digital crime in 2022. The center recorded 800,944 complaints, leading to financial losses exceeding $10.3 billion. The increased sophistication in these crimes is driven, in part, by advancements in artificial intelligence technology. Many companies are still inadequately equipped to counter these escalating cyber threats because of the following factors: All of the above adds up to an immense investment required from SMBs to keep cybercriminals out […]

Learn about AD attacks and how hackers are getting access into your network.

By subscribing you agree to our Privacy Policy and provide consent to receive updates from our company.